ZeroClaw: The Lightweight AI Runtime That Is Truly Portable

In recent years, the world of AI assistants and agentic workflows has moved extremely fast. But behind many impressive demos, one practical issue appears again and again when systems start being used in the real world: the runtime is heavy, complex, expensive to operate, and hard to move. Many modern AI stacks feel fine on powerful laptops or generous cloud servers, but become inconvenient once they need to run on smaller machines, tighter environments, or cost-sensitive deployments.

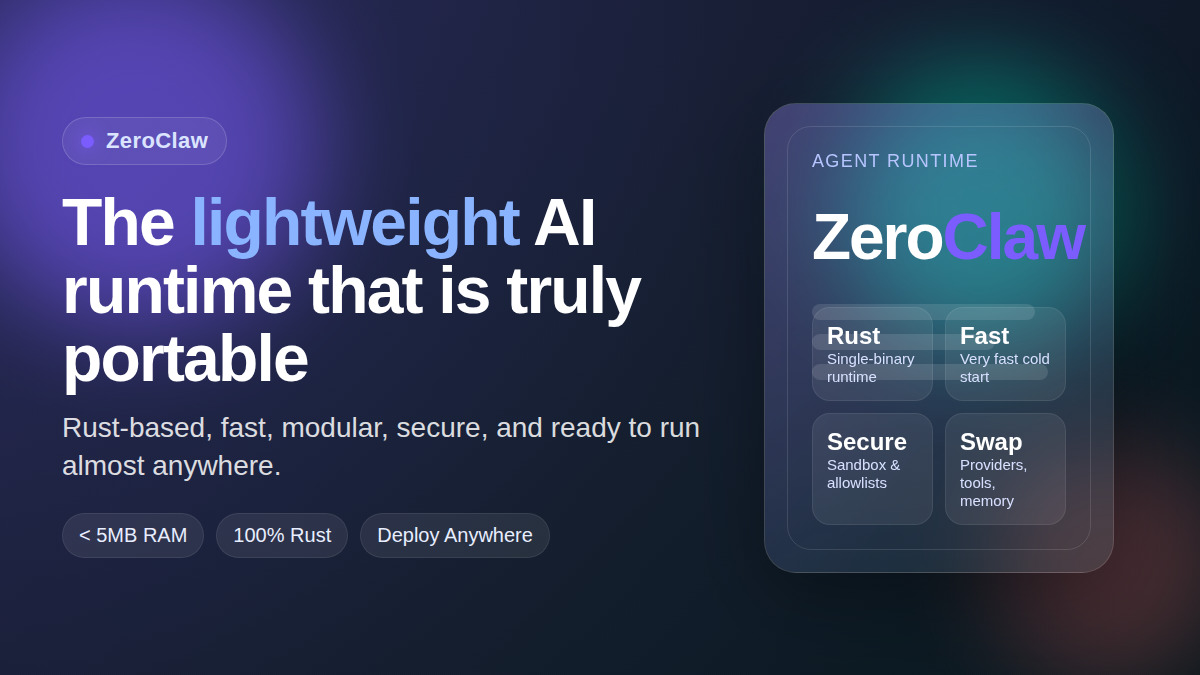

This is exactly where ZeroClaw becomes interesting. The project positions itself not merely as a chatbot or a ready-made AI app, but as a runtime framework for agentic workflows. Its focus is not only on making agents “able to answer,” but on providing an infrastructure foundation where models, tools, memory, channels, and execution can be assembled in a way that is lightweight, secure, modular, and portable.

If summarized in one sentence, ZeroClaw makes an ambitious but highly relevant promise: build once, run anywhere for agentic workflows—with a resource footprint that is far smaller than many mainstream alternatives.

What Is ZeroClaw?

According to its official repository, ZeroClaw is a Rust-based runtime framework for building and running agentic workflows. It is designed so that core system components—such as model providers, channels, tools, memory backends, and tunnels—are swappable without forcing a complete architectural rewrite.

That design choice matters. Many AI systems appear flexible on the surface, but are actually tightly coupled to one model vendor, one deployment pattern, or one integration style. ZeroClaw takes the opposite direction: it tries to keep the runtime layer agnostic, so developers retain more control over the systems they build.

In practical terms, ZeroClaw is best understood not as a single end-user product, but as an execution foundation for AI assistants, agent workflows, lightweight automation runtimes, and orchestration systems that need to stay fast and efficient.

Why ZeroClaw Deserves Attention

There are several reasons why ZeroClaw stands out.

1. Lightweight is not just marketing

The official repository emphasizes that ZeroClaw is designed to run common workflows in a sub-5MB RAM envelope on release builds. Whatever benchmark methodology is behind that number, the direction is very clear: ZeroClaw is trying to minimize runtime overhead as aggressively as possible.

That matters because many AI tools are burdened not only by the model itself, but also by the framework layer, interpreter runtime, dependency tree, and surrounding helper processes. Once that overhead grows, deployment costs rise and hardware choices shrink.

2. Rust creates operational advantages

Because ZeroClaw is built in Rust, it benefits from several very practical strengths:

- extremely fast startup,

- a portable single-binary deployment style,

- low memory usage,

- simpler operational packaging,

- and stronger control over performance and safety.

In the context of an agent runtime, this is not merely a language preference. It directly affects day-to-day operations: fewer heavyweight dependencies, better cold starts, and more realistic deployment options for small servers, low-cost boards, or edge hardware.

3. Trait-driven and pluggable architecture

One of the project’s most important ideas is its trait-driven architecture. Core capabilities are modeled as abstract contracts that can be implemented and replaced. This gives the system a high degree of flexibility for swapping model providers, communication channels, tools, or memory backends.

For developers, that means a healthier long-term architecture. You are not forced to build everything around a single vendor or one fixed stack. If you need to move to another model endpoint, redesign your toolchain, or change the interaction layer later, the cost of change can be much more manageable.

4. Secure-by-default matters more than it seems

ZeroClaw highlights pairing, strict sandboxing, explicit allowlists, and workspace scoping as part of its security posture. That is highly relevant because agentic systems are not passive text generators; they are systems that can perform actions.

The more power an agent has over files, tools, network access, or command execution, the more important it is to design guardrails at the runtime level. A fast framework with weak safety boundaries can become a liability. That is why ZeroClaw’s secure-by-default direction deserves serious attention.

ZeroClaw’s Place in the Agentic AI Landscape

ZeroClaw is interesting because it does not compete primarily at the level of a simple “AI chat app.” It is much closer to the runtime and infrastructure layer. So the key question is not “can ZeroClaw chat?” but rather: “can ZeroClaw act as a small, robust execution engine for agents with low overhead and high flexibility?”

For many real-world teams, that is exactly the right question. What they often need is:

- a runtime that starts quickly,

- a small footprint for low-cost VPS or tiny boards,

- an architecture that avoids vendor lock-in,

- swappable providers, tools, and channels,

- and a reasonable security foundation for agent execution.

In that context, ZeroClaw offers a distinct value proposition compared with projects that focus primarily on interface polish or chat UX.

The Most Interesting Features

From the official README, several features stand out.

Lean runtime by default

Common CLI and status workflows are designed to fit within a few megabytes of memory. That opens the door to much more economical deployment patterns.

Fast cold starts

Because ZeroClaw uses a single-binary Rust runtime, command and daemon startup can be extremely fast. That matters for automation hooks, daily CLI use, and systems that are started frequently.

Portable architecture

The project emphasizes a binary-first workflow across ARM, x86, and RISC-V. That makes it relevant not only for mainstream laptops and servers, but also for alternative hardware and edge scenarios.

Swappable everything

Providers, channels, tools, memory systems, and tunnels are designed to be pluggable. This is one of the project’s most important architectural promises.

Migration path from OpenClaw

ZeroClaw also provides a migration path from OpenClaw, including import of agents, memory, and configuration. This shows that the project is not only proposing a new vision, but also thinking about practical onboarding from an existing ecosystem.

Why the “Deploy Anywhere” Narrative Matters

Many AI projects claim to be flexible, but the operational reality is often different. Some are flexible on a developer laptop, but not on a small VPS. Some run in the cloud, but are too heavy for low-cost hardware. Some look modular in the documentation, but become painful to move because of implicit dependencies.

ZeroClaw tries to address this problem at the root: reduce runtime overhead, keep deployment binary-first, preserve modularity, and make core subsystems replaceable. If that philosophy remains consistent as the project grows, ZeroClaw could become a very strong foundation for pragmatic agent runtimes.

What Should Still Be Viewed Critically

Even though the project is promising, several things should still be evaluated with healthy skepticism.

1. Benchmarks always need context

Numbers around RAM, startup time, and comparison tables are interesting, but they should be read as snapshots from the project’s own methodology. Real evaluation should still be done against your own workloads, hardware, and operational patterns.

2. A lightweight runtime does not automatically mean a mature ecosystem

Lightweight engineering is an important advantage, but long-term success also depends on documentation quality, API stability, extension points, and community momentum.

3. Architectural flexibility must be matched by good developer experience

A modular system can be powerful, but if it becomes too abstract or too low-level, onboarding may feel difficult for some users. Documentation and DX still matter a great deal.

When Is ZeroClaw a Good Fit?

ZeroClaw is especially compelling for scenarios such as:

- building resource-efficient agent runtimes,

- running AI assistants on small devices or edge hardware,

- creating vendor-agnostic agentic systems,

- deploying workflows that benefit from very fast cold starts,

- or experimenting with AI infrastructure where portability and efficiency matter.

It is also attractive for developers who want to control the runtime layer, not just use a finished application.

On the other hand, if your main need is a highly mature team dashboard, full enterprise workflow management from day one, or an all-inclusive no-code experience, ZeroClaw may not be the only layer you need. You may still want an additional application-layer system on top.

Conclusion

ZeroClaw is an exciting project because it does not merely promise a faster AI assistant. It offers something more fundamental: a small, fast, secure, and portable runtime framework for agentic systems. With its Rust foundation, pluggable architecture, and strong emphasis on operational efficiency, it presents a refreshing alternative in an AI ecosystem that often becomes heavier and more locked-in over time.

Its biggest value is not just the claim that it is “faster” or “lighter,” but the design direction behind it: giving developers more freedom to build flexible agentic systems without paying a large runtime tax from the beginning.

If the future of agentic workflows keeps moving toward more practical, distributed, and cost-conscious deployments, then ZeroClaw is absolutely a project worth watching closely.